Trivial birthday attacks against HMAC

I ran a poll on Twitter, asking "Is HMAC vulnerable to birthday attacks?". There were 34 votes: 26% said "Yes", 27% "I don't care", and 47% "No". Thomas Pornin, a respected crypto engineer, voted no.

In this post I'll describe two trivial birthday attacks against HMAC. The first one is theoretical, but the second one is more practical and security-relevant.

Existential forgery attack

Suppose we have access to an HMAC-HASH signing oracle. HASH can be MD5, SHA1, SHA256, etc. The oracle holds a secret key $K$, and when we send it a message $m$, it returns $\textit{HMAC}(K, m)$.

If we query the oracle for $2^{n/2}$ messages, where $n$ is the output length of the hash function in bits, the birthday paradox says that we will likely get a collision. That means we get $m_1 \neq m_2$ such that $\textit{HMAC-HASH}(K, m_1) = \textit{HMAC-HASH}(K, m_2)$.

When this happens, and assuming that $m_1$ and $m_2$ have the same length, we can query the oracle for the tag of $m_1 \;||\; X$, where $X$ is an arbitrary message, and with high probability the returned value is also the tag of $m_2 \;||\; X$.

In other words, if

$\textit{HMAC-HASH}(K, m_1) = \textit{HMAC-HASH}(K, m_2)$

then it's likely that

$\textit{HMAC-HASH}(K, m_1 \;||\; X) = \textit{HMAC-HASH}(K, m_2 \;||\; X)$.

Thus we have successfully forged a signature for $m_2 \;||\; X$ at roughly the square root of the cost of a serial brute-force search: about $2^{n/2}$ oracle queries instead of $2^n$ guesses.
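To make the extension step concrete, here is a minimal sketch at toy scale. It assumes a made-up 24-bit Merkle-Damgård hash (`toy_hash`, built from truncated SHA-256 purely for illustration) with an HMAC-style construction (`toy_hmac`) on top; none of these names come from a real library. One detail matters in this sketch: the suffix has to begin with the padding that the hash appended to the original message, which is the same for $m_1$ and $m_2$ because they have the same length. Only collisions in the inner hash extend this way, so the search keeps going until it finds one.

```python
import hashlib

BLOCK = 16   # toy block size in bytes (an assumption of this sketch)
N = 3        # toy state/output size in bytes, i.e. n = 24 bits

def compress(state, block):
    # Toy compression function: truncated SHA-256 of state || block.
    return hashlib.sha256(state + block).digest()[:N]

def pad(length):
    # Merkle-Damgard padding for a message of `length` bytes.
    zeros = (-(length + 1 + 4)) % BLOCK
    return b"\x80" + b"\x00" * zeros + length.to_bytes(4, "big")

def toy_hash(msg):
    data = msg + pad(len(msg))
    state = b"\x00" * N
    for i in range(0, len(data), BLOCK):
        state = compress(state, data[i:i + BLOCK])
    return state

def toy_hmac(key, msg):
    key = key.ljust(BLOCK, b"\x00")  # keys longer than BLOCK are not handled here
    ipad = bytes(k ^ 0x36 for k in key)
    opad = bytes(k ^ 0x5C for k in key)
    return toy_hash(opad + toy_hash(ipad + msg))

def find_extendable_collision(oracle, x=b"attack at dawn", max_queries=200_000):
    seen = {}  # tag -> message that produced it
    for i in range(max_queries):
        m = i.to_bytes(8, "big")          # all queries have the same length
        tag = oracle(m)
        if tag in seen:
            m1, m2 = seen[tag], m
            # The key block plus m1 is what the inner hash padded.
            suffix = pad(BLOCK + len(m1)) + x
            # A real attacker would query only m1 + suffix; the second query
            # is here just to confirm that the forgery would be accepted.
            if oracle(m1 + suffix) == oracle(m2 + suffix):
                return m1, m2, suffix
        seen[tag] = m
    return None

key = b"secret key"  # known only to the oracle
result = find_extendable_collision(lambda m: toy_hmac(key, m))
```

With a 24-bit state a collision typically shows up after a few thousand queries, which is the $2^{n/2}$ behaviour described above scaled down to something a laptop can do instantly.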

Of course this attack is purely theoretical. For example, with HMAC-MD5 the attack requires $2^{64}$ oracle queries, and $2^{71}$ bits of memory. Nevertheless, it's still a proof that HMAC is not safe against birthday attacks.

Two important notes before we consider the second attack:

- Truncating HMAC tags doesn't reduce the cost of the birthday attack. The running time of the attack is still $O(2^{n/2})$. On the other hand, if the tag is truncated too much, just randomly guessing it might have a decent chance of success (if the tag is truncated to $t$ bits, each random $t$-bit string has a $2^{-t}$ chance of being correct).

- A collision in HASH doesn't necessarily lead to a forgery in the HMAC-HASH function. With $m_1$ and $m_2$ such that MD5($m_1$) == MD5($m_2$), it's not guaranteed that $\textit{HMAC-MD5}(K, m_1)$ is equal to $\textit{HMAC-MD5}(K, m_2)$. This is because $K$ has already changed the initial state of MD5 by the time it starts processing $m_1$ or $m_2$. All known MD5 or SHA1 collision attacks require knowing the initial state, but $K$ is unknown, thus these attacks won't work against HMAC-MD5 or HMAC-SHA1.

Duplicate signature attack

(Dan Kaminsky wrote about this attack last year: http://dankaminsky.com/2015/05/07/the-little-mac-attack/. See also this thread on Hacker News: https://news.ycombinator.com/item?id=9533984)

Let's take a look at how HMAC is defined:

$\textit{HMAC-HASH}(K, m) = \textit{HASH} \Bigl( (K \oplus opad) \;||\; \textit{HASH} \bigl( (K \oplus ipad) \;||\; m \bigr) \Bigr)$,

where opad and ipad are padding constants. If we have a collision in the inner hash $\textit{HASH} \bigl( (K \oplus ipad) \;||\; m \bigr)$, we are guaranteed to have a collision in the outer hash and the whole HMAC-HASH function. That means if we know $K$, and we can find $m_1$ and $m_2$ such that $\textit{HASH} \bigl( (K \oplus ipad) \;||\; m_1 \bigr) = \textit{HASH} \bigl( (K \oplus ipad) \;||\; m_2 \bigr)$, we will obtain $\textit{HMAC-HASH}(K, m_1) = \textit{HMAC-HASH}(K, m_2)$.
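The definition can be written out directly with Python's standard `hashlib`, which makes the inner and outer hash visible. This is a minimal sketch, not a replacement for the `hmac` module; the function name `hmac_from_definition` is made up for illustration, and the 0x36 and 0x5C bytes are the ipad and opad constants from the HMAC specification.

```python
import hashlib
import hmac

def hmac_from_definition(key, msg, hash_name="sha256"):
    block_size = hashlib.new(hash_name).block_size
    # Keys longer than one block are hashed first, then zero-padded to a block.
    if len(key) > block_size:
        key = hashlib.new(hash_name, key).digest()
    key = key.ljust(block_size, b"\x00")
    ipad = bytes(b ^ 0x36 for b in key)
    opad = bytes(b ^ 0x5C for b in key)
    inner = hashlib.new(hash_name, ipad + msg).digest()   # inner hash
    return hashlib.new(hash_name, opad + inner).digest()  # outer hash

key, msg = b"some key", b"some message"
same = hmac.compare_digest(
    hmac_from_definition(key, msg),
    hmac.new(key, msg, "sha256").digest(),
)
```

Because the outer hash sees the message only through `inner`, any two messages that give the same `inner` value necessarily give the same tag.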

This is not a forgery attack, because the attacker must know the key. It is called a duplicate signature attack in the literature, as it produces two messages with the same tag. It is more security-relevant than the first attack.

I once looked at a server that:

- accepts a signed command from the requester

- verifies the signature

- validates the command

- finally executes it.

Because command validation is expensive, once a command is validated its signature is added to a cache. For every new command the system verifies the signature and checks whether it is in the cache; if so, it runs the command without validating it again. This system is thus vulnerable to the duplicate signature attack. An adversary can generate two commands with the same signature, one benign and the other malicious. They send the benign command first so that its signature is added to the cache. Then they send the malicious command, which bypasses validation because its signature is already in the cache. The root cause of this bug is that HMAC is a pseudorandom function (PRF); it should not be used as a pseudorandom permutation (PRP) (more on PRP vs PRF).
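The caching bug can be sketched in a few lines. Everything here is hypothetical: the `Server` class, its `is_safe` check, and the command strings are invented for illustration, and the tag is deliberately truncated to a single byte so that a colliding pair can be found instantly. Against a full-length tag the attacker would need the collision search described in this post instead.

```python
import hashlib
import hmac

KEY = b"key shared with the requester"  # the attacker is a legitimate key holder

def weak_tag(msg):
    # 1-byte tag: deliberately tiny so the demo can find a collision at once.
    return hmac.new(KEY, msg, hashlib.sha256).digest()[:1]

class Server:
    def __init__(self):
        self.validated_tags = set()
        self.executed = []

    def is_safe(self, command):
        # Stand-in for the expensive validation step.
        return not command.startswith(b"rm")

    def handle(self, command, tag):
        if not hmac.compare_digest(tag, weak_tag(command)):
            return "bad signature"
        if tag not in self.validated_tags:  # BUG: cache is keyed by the tag only
            if not self.is_safe(command):
                return "rejected"
            self.validated_tags.add(tag)
        self.executed.append(command)
        return "executed"

def find_colliding_pair():
    # Offline search: the attacker knows KEY, so no oracle is needed.
    benign = {}
    for i in range(1000):
        cmd = b"echo %d" % i
        benign[weak_tag(cmd)] = cmd
    for i in range(1000):
        evil = b"rm -rf /tmp/%d" % i
        if weak_tag(evil) in benign:
            return benign[weak_tag(evil)], evil
    return None

benign_cmd, evil_cmd = find_colliding_pair()
server = Server()
first = server.handle(evil_cmd, weak_tag(evil_cmd))       # validation runs
second = server.handle(benign_cmd, weak_tag(benign_cmd))  # tag gets cached
third = server.handle(evil_cmd, weak_tag(evil_cmd))       # validation skipped
```

One way to avoid the problem, under the same assumptions, would be to key the cache on the command itself (or on a collision-resistant hash of it) rather than on the tag.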

This second attack is much easier to exploit than the first one. We just need to generate a collision in the hash function, and we can do that offline (without having to query any oracle). Again the birthday paradox tells us that we need to generate only $2^{n/2}$ messages before hitting a collision. The problem is the memory cost. Storing $2^{n/2}$ messages is a big deal. Fortunately there is an algorithm that reduces not only the memory cost, but also the running time. This algorithm is described in the classic paper "Parallel collision search with cryptanalytic applications" by van Oorschot and Wiener.

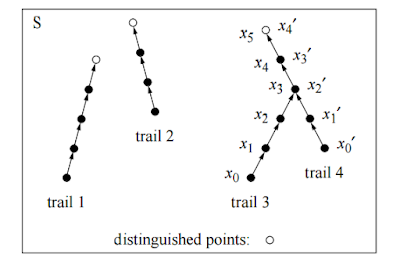

The core idea is similar to the mining process in Bitcoin. Each CPU independently searches for a "distinguished point", e.g., a value whose first 32 bits are all zero, by iterating a function $f$ and producing a trail. When a distinguished point is found, the CPU reports a triple consisting of the starting point, the number of iterations, and the distinguished point to a central server. The CPU then picks another starting point and searches for the next distinguished point. As there is no communication or synchronization between the CPUs, the algorithm parallelizes perfectly, which in turn vastly reduces the running time. After the CPUs have run for a while, the central server sorts the distinguished points and looks for collisions. If it finds one, two trails produced by two CPUs collided and merged with each other at some point. Right before the point where the trails merge, we will find two values $a$ and $b$ such that $f(a) = f(b)$.
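The distinguished-point idea can be tried out at toy scale. The sketch below is a single-threaded approximation, not the parallel machine from the paper: `f` is SHA-256 truncated to 24 bits, a point is "distinguished" when its top 6 bits are zero, and all names and parameters are chosen for illustration only. Each loop iteration in `find_collision` plays the role of one CPU reporting a triple to the central server.

```python
import hashlib

N_BYTES = 3                     # toy 24-bit function
DP_BITS = 6                     # distinguished: top 6 bits are zero
MAX_STEPS = 20 * 2 ** DP_BITS   # abandon trails that are probably stuck in a cycle

def f(x):
    return hashlib.sha256(x).digest()[:N_BYTES]

def is_distinguished(x):
    return int.from_bytes(x, "big") >> (8 * N_BYTES - DP_BITS) == 0

def trail(start):
    # Iterate f from `start` until a distinguished point is reached.
    x, steps = start, 0
    while not is_distinguished(x):
        x = f(x)
        steps += 1
        if steps > MAX_STEPS:
            return None
    return steps, x

def locate(start_a, steps_a, start_b, steps_b):
    # Two trails end at the same distinguished point: find where they merge.
    a, b = start_a, start_b
    while steps_a > steps_b:    # give the longer trail a head start
        a, steps_a = f(a), steps_a - 1
    while steps_b > steps_a:
        b, steps_b = f(b), steps_b - 1
    if a == b:                  # one trail started on the other: no collision
        return None
    while f(a) != f(b):
        a, b = f(a), f(b)
    return a, b

def find_collision(max_trails=100_000):
    table = {}  # distinguished point -> (start, steps), the server's storage
    for i in range(max_trails):
        start = f(b"seed" + i.to_bytes(4, "big"))
        result = trail(start)
        if result is None:
            continue
        steps, dp = result
        if dp in table:
            pair = locate(start, steps, *table[dp])
            if pair is not None:
                return pair
        table[dp] = (start, steps)
    return None

collision = find_collision()
```

Only the end of each trail is stored, so memory use is governed by the number of trails rather than by the number of hash evaluations.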

Suppose that we are attacking HMAC-SHA1.

Suppose that we have a machine with $2^{40}$ SHA1 circuits, and we feed each of them a random starting point, and let them work in parallel to find distinguished points which start with 40 zero bits. The machine can compute $2^{80}$ hashes in time of only $2^{40}$ SHA1 computations. See "Understanding brute force" by Dan Bernstein for how such a machine can be built.

The central server has to store the distinguished points, thus it needs some memory. Let's say that it has 128 GB of memory. It has to store many of the aforementioned triples. Because we're going to produce $2^{40}$ trails, the starting point can be represented with 5 bytes. The distinguished point can be represented with 16 bytes because a SHA1 hash value is 20 bytes long, and distinguished points have at least 4 leading zero bytes which need not be stored. The number of steps can be represented with 6 bytes, because $2^{48}$ is the maximum number of iterations before we abandon a trail (e.g., because it has entered a cycle). Overall, a triple requires 27 bytes. Thus with 128 GB of memory we can store around $4.74 \times 10^9$ such triples.

Since it takes on average $2^{40}$ iterations before a trail reaches a distinguished point, by the time the memory on the central server is nearly full, we have computed on average $2^{40} \times 4.74 \times 10^9 \approx 2^{72}$ SHA1 hashes. This should give us (approximately) a $2^{-17}$ chance of having a collision. If we run the machine again and again, with each run taking slightly more time than $2^{40}$ SHA1 computations, we'll increase the chance of hitting a collision to pretty close to 1.
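The back-of-the-envelope numbers above can be reproduced with a few lines of arithmetic. This sketch assumes 128 GB means $128 \times 10^9$ bytes and uses the usual birthday approximation $k^2 / 2^{n+1}$ for the chance of a collision among $k$ values of an $n$-bit hash; the variable names are only for illustration.

```python
import math

MEMORY_BYTES = 128 * 10**9      # assuming decimal gigabytes
TRIPLE_BYTES = 5 + 16 + 6       # start point + distinguished point + step count

triples = MEMORY_BYTES // TRIPLE_BYTES

# Each stored triple stands for a trail of about 2^40 SHA1 evaluations.
log2_hashes = 40 + math.log2(triples)

# Birthday approximation for a 160-bit hash: k^2 / 2^161, kept in log2 form.
log2_collision_chance = 2 * log2_hashes - 161

print(f"triples stored:       {triples:.3e}")
print(f"hashes computed:      2^{log2_hashes:.1f}")
print(f"chance of collision:  2^{log2_collision_chance:.1f}")
```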

As noted by van Oorschot and Wiener, the run time of this attack is proportional to the square root of the hash result space. Thus if this attack were applied to SHA256, it would be $2^{48}$ times slower.

Three conclusions:

- There are birthday attacks that work well against HMAC.

- Birthday attacks can usually be parallelized.

- Use HMAC-SHA256 when you can.
